Noah here. Lots of you have likely heard of the “Turing Test,” a thought experiment described by pioneering computer scientist Alan Turing in a 1950 paper titled “Computing Machinery And Intelligence.” In trying to ascertain how you can tell if a machine is thinking, he proposes a game:

It is played with three people, a man (A), a woman (B), and an interrogator (C) who may be of either sex. The interrogator stays in a room apart from the other two. The object of the game for the interrogator is to determine which of the other two is the man and which is the woman [without being able to see them or hear their voice]. He knows them by labels X and Y, and at the end of the game he says either ‘X is A and Y is B’ or ‘X is B and Y is A’.

Turing goes on to suggest that you could replace the man with a machine and see if it could fool the interrogator. This has come to be popularly known as “the Turing test” and is considered a fun test of a certain kind of machine intelligence. A version of the test happens each year as part of the Loebner Prize, wherein teams compete to write programs that convince human judges they’re talking to a person. Lots of people think the whole thing is stupid and most certainly not a test of machine intelligence.

Why is this interesting?

One of the reasons the competition isn’t viewed as an accurate measure of artificial intelligence is that building a bot that can trick a human is a very narrow objective. What’s more, chatbots have been tricking humans for a long time now by tapping into some pretty basic patterns of conversation. In 1966, Joseph Weizenbaum wrote the first chatbot—a program called ELIZA:

The gross procedure of the program is quite simple; the text is read and inspected for the presence of a keyword. If such a word is found, the sentence is transformed according to a rule associated with the keyword, if not a content-free remark or, under certain conditions, an earlier transformation is retrieved. The text so computed or retrieved is then printed out.

His first script was based on a Rogerian psychotherapist, which he explained was “relatively easy to imitate because much of his technique consists of drawing his patient out by reflecting the patient's statements back to him.” Here’s a snippet of conversation from his original 1966 paper (capitals are the computer’s response):

It's true. I am unhappy.

DO YOU THINK COMING HERE WILL HELP YOU NOT TO BE UNHAPPY

I need some help, that much seems certain.

WHAT WOULD IT MEAN TO YOU IF YOU GOT SOME HELP

Perhaps I could learn to get along with my mother.

TELL ME MORE ABOUT YOUR FAMILY

My mother takes care of me.

WHO ELSE IN YOUR FAMILY TAKES CARE OF YOU

My father.

YOUR FATHER

You are like my father in some ways.

WHAT RESEMBLANCE DO YOU SEE

You are not very aggressive but I think you don't want me to notice that.

WHAT MAKES YOU THINK I AM NOT VERY AGGRESSIVE

You don't argue with me.

WHY DO YOU THINK I DON'T ARGUE WITH YOU

You are afraid of me.

DOES IT PLEASE YOU TO BELIEVE I AM AFRAID OF YOU

The trick, of course, is that the computer has no memory of what was previously said: it’s just constantly reacting and replaying the last statement. While at first this seems impossibly obvious, it’s interesting to think about when real-life conversations verge in this direction. Arguments, in particular, tend to be stateless in this way—when things get especially bad, both parties lose track of any shared history and just volley reactions back and forth.
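For the curious, here is a rough sketch of the keyword-and-transform procedure Weizenbaum describes. It is not his code or his script: the rule table, the fallback line, and the names `RULES`, `FALLBACK`, `reflect`, and `respond` are all made up for illustration, and the "I am" rule is an invented example rather than something taken from the transcript above. The point is only that each reply is computed from the last sentence and nothing else.

```python
import re

# Hypothetical rule table: (keyword pattern, response template).
# The first rule whose pattern is found in the sentence wins.
RULES = [
    (re.compile(r"\bI need (.+)", re.IGNORECASE),
     "WHAT WOULD IT MEAN TO YOU IF YOU GOT {0}"),
    (re.compile(r"\b(mother|father)\b", re.IGNORECASE),
     "TELL ME MORE ABOUT YOUR FAMILY"),
    (re.compile(r"\bI am (.+)", re.IGNORECASE),
     "HOW LONG HAVE YOU BEEN {0}"),
]

# Content-free remark used when no keyword is present (an assumption).
FALLBACK = "PLEASE GO ON"

# Swap first and second person so a fragment can be reflected back.
REFLECTIONS = {"i": "you", "me": "you", "my": "your",
               "you": "I", "your": "my", "am": "are"}


def reflect(fragment):
    """Flip pronouns in a captured fragment, word by word."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(sentence):
    """Build a reply from the current sentence only; nothing is remembered."""
    cleaned = sentence.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            fragments = [reflect(g).upper() for g in match.groups()]
            return template.format(*fragments)
    return FALLBACK


for line in ["I need some help, that much seems certain.",
             "My mother takes care of me.",
             "The weather is nice."]:
    print(respond(line))
```

Because `respond` takes only the current sentence, feeding it the same line twice always produces the same reply, no matter what came before. That is the statelessness in miniature.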

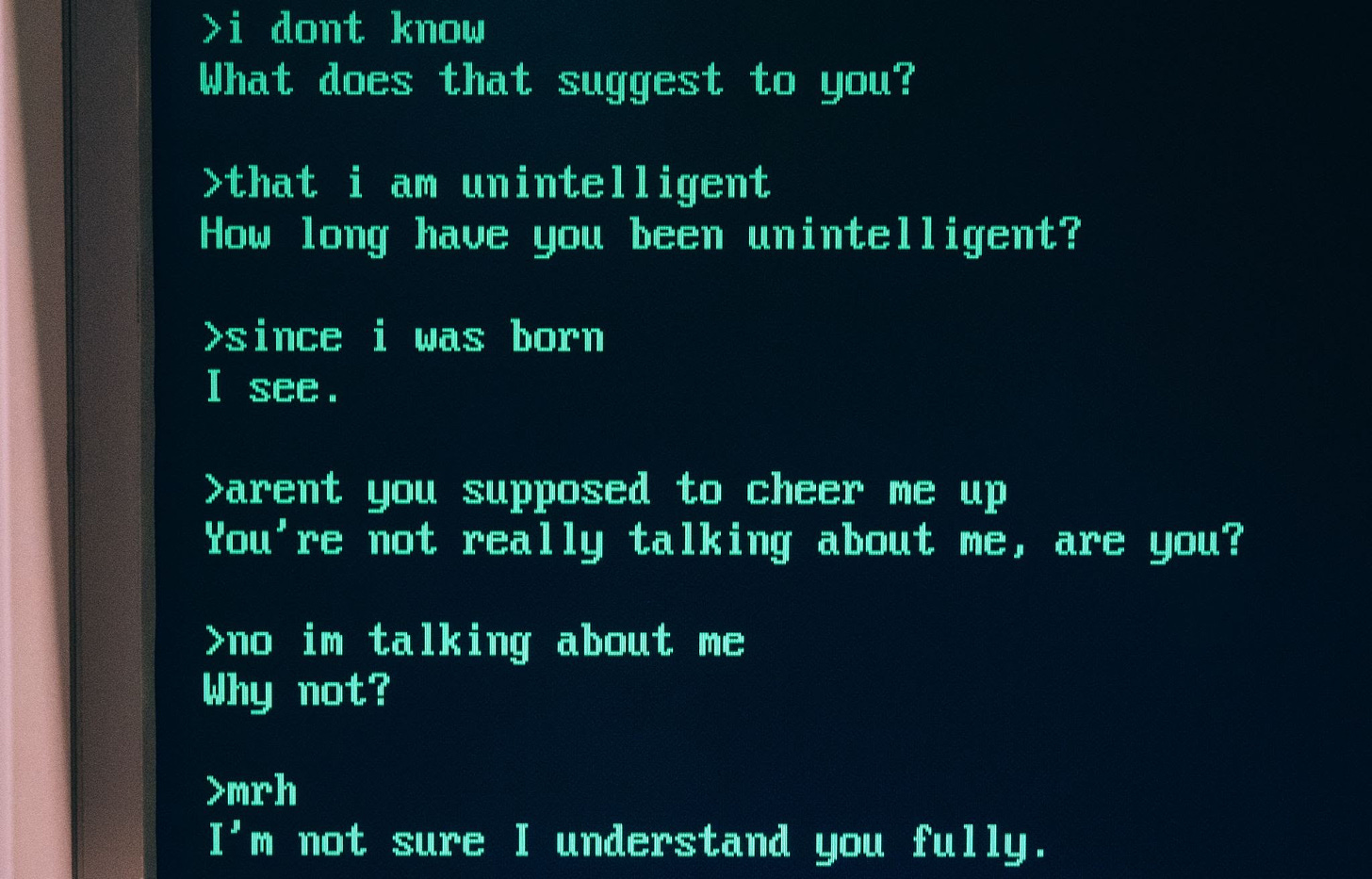

To that point, another famous chatbot is 1989’s MGonz from Mark Humphrys. Rather than going for a caring conversationalist, Humphrys went with a harsher approach. Here’s a bit on Humphrys and MGonz from Brian Christian’s fantastic book The Most Human Human:

Humphrys’s twist on the age-old chatbot paradigm of the “non-directive” conversationalist who lets the user do all the talking was to model his program, rather than on an attentive listener, on an abusive jerk. When it lacks any clear cue for what to say, MGonz falls back not on therapy clichés like “How does that make you feel?” or “Tell me more about that” but on things like “you are obviously an asshole,” “ok thats it im not talking to you any more,” or “ah type something interesting or shut up.” It’s a stroke of genius, because, as becomes painfully clear from reading the MGonz transcripts, argument is stateless.

Anyone who has spent any time on the internet will recognize the pattern and understand how a chatbot could have tricked so many people. The worst social media arguments quickly disconnect from history and become a competition between sides to tear apart whatever was said last. While we often point at the sad state of dialogue in those spaces, the reality is that arguments, at least the worst ones, almost always work this way. When people get particularly hurt, especially by someone they care about, it’s often because whatever was shared was lost in the heat of replying to the last statement.

As Christian concludes in his section on the bot, understanding this can be incredibly powerful. “Since reading the papers on MGonz, and its transcripts, I find myself much more able to constructively manage heated conversations. Aware of their stateless, knee-jerk character, I recognize that the terse remark I want to blurt has far more to do with some kind of ‘reflex’ to the very last sentence of the conversation than it does with either the actual issue at hand or the person I’m talking to.” Here’s to hoping. (NRB)

Product of the day:

WITI contributor Robert Spangle recently shared his excellent photos from Kabul before the fall in these pages. He’s also a really talented product designer. We’re obsessed with this bag from his Observer Collection. It is carry-on size and built to last forever. He also makes very good leather goods. For a more entry-priced option, you can’t go wrong with this great passport wallet. (CJN)

Quick Links:

Peyton and Eli Manning doing a Monday Night Football broadcast together is great. (NRB)

A very brutal military test. (CJN)

Thanks for reading,

Noah (NRB) & Colin (CJN)

—

Why is this interesting? is a daily email from Noah Brier & Colin Nagy (and friends!) about interesting things. If you’ve enjoyed this edition, please consider forwarding it to a friend. If you’re reading it for the first time, consider subscribing (it’s free!).
