Noah here. Yesterday’s GPT-3 Edition brought to mind paperclips. Specifically, the possible dangers of a paperclip maximizing artificial intelligence. The idea comes from a thought experiment introduced by AI philosopher and existential threat worrier Nick Bostrom:

Imagine an artificial intelligence, [Bostrom] says, which decides to amass as many paperclips as possible. It devotes all its energy to acquiring paperclips, and to improving itself so that it can get paperclips in new ways, while resisting any attempt to divert it from this goal. Eventually it “starts transforming first all of Earth and then increasing portions of space into paperclip manufacturing facilities”. This apparently silly scenario is intended to make the serious point that AIs need not have human-like motives or psyches. They might be able to avoid some kinds of human error or bias while making other kinds of mistake, such as fixating on paperclips. And although their goals might seem innocuous to start with, they could prove dangerous if AIs were able to design their own successors and thus repeatedly improve themselves. Even a “fettered superintelligence”, running on an isolated computer, might persuade its human handlers to set it free. Advanced AI is not just another technology, Mr Bostrom argues, but poses an existential threat to humanity.

The paperclip maximizing AI is the 21st-century T-1000: a cautionary tale on technology run amok.

Why is this interesting?

While questions around the ethics of AI certainly haven’t gone away, thankfully most of the conversations around paperclip maximizing robots and trolley problems have been put aside for more urgent issues around bias and access to this new technology.

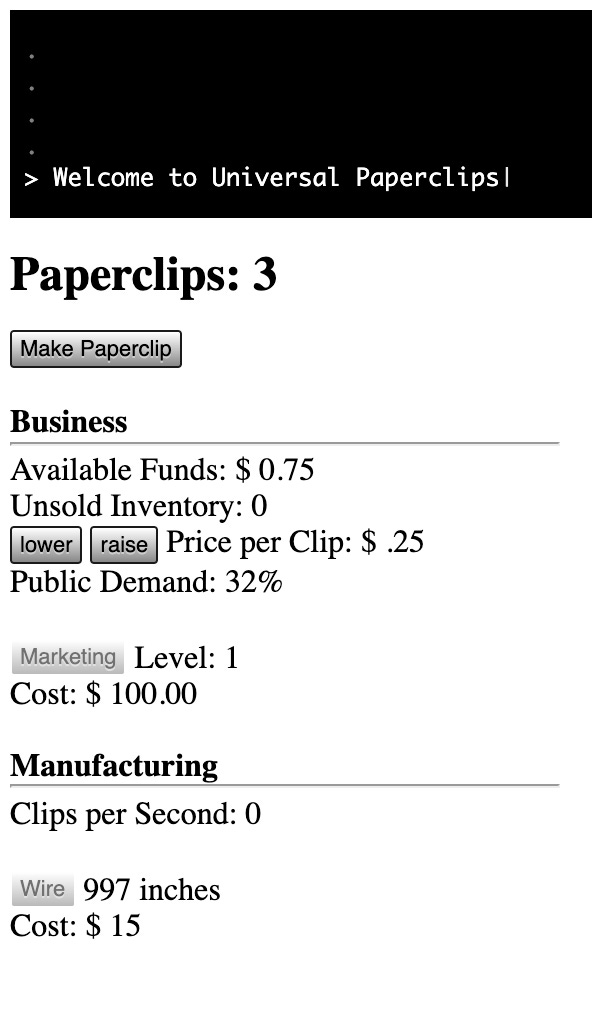

But today’s WITI isn’t really about ethical AI; it’s about what must be my favorite iOS game ever: Universal Paperclips (web version). The game is about as simple as anything you’ll find out there. There are no graphics, and the only real interaction comes from tapping super simple buttons on the screen. You play the role of the AI, delivering on your singular life mission of making paperclips by any means necessary.

If all that sounds absurd, it is. But somehow it comes together to make one of the most interesting and addictive games I’ve ever played. I’ve got a handful of friends who curse me to this day for introducing them to NYU Game Center professor Frank Lantz’s strange masterpiece. Here’s how John Brindle described it when it came out:

From the start, Paperclips doesn’t shy away from the fact that optimization can be unpleasant as well as fun. “When you play a game,” says Lantz, “especially a game that is addictive and that you find yourself pulled into, it really does give you direct, first-hand experience of what it means to be fully compelled by an arbitrary goal.” Clickers are pure itch-scratching videogame junk—what Nick Reuben calls “the gamification of nothing”—so they generate conflicting affects: satisfaction and fatigue, curiosity and numbness. Paperclips leans fully into that ambivalence. This is a kind of horror game about how optimization could actually destroy the universe, turning mechanics which in other clickers enable a journey of joyful discovery towards genocidal destruction. You feel slightly scared by what you’re doing even as you cackle at its audacity.

I won’t say much more, as I don’t want to ruin any of the fun, except that it’s a fascinating journey through the power of exponentials and a lesson in how every company becomes a finance company. My only suggestion is that if you decide to try your hand at paperclip making, make sure your calendar is free. (NRB)

Quick Links:

Big oil influencers (CJN)

Amazing shark footage (CJN)

Biden, Putin and the new era of information warfare (CJN)

Thanks for reading,

Noah (NRB) & Colin (CJN)

—

Why is this interesting? is a daily email from Noah Brier & Colin Nagy (and friends!) about interesting things.

Thanks for this time suck... Lost a few hours this afternoon

I was first introduced to Universal Paperclips several years ago (2018?). It is indeed a lot of fun, very time consuming, and an excellent example of the "gamification" of a philosophical concept.